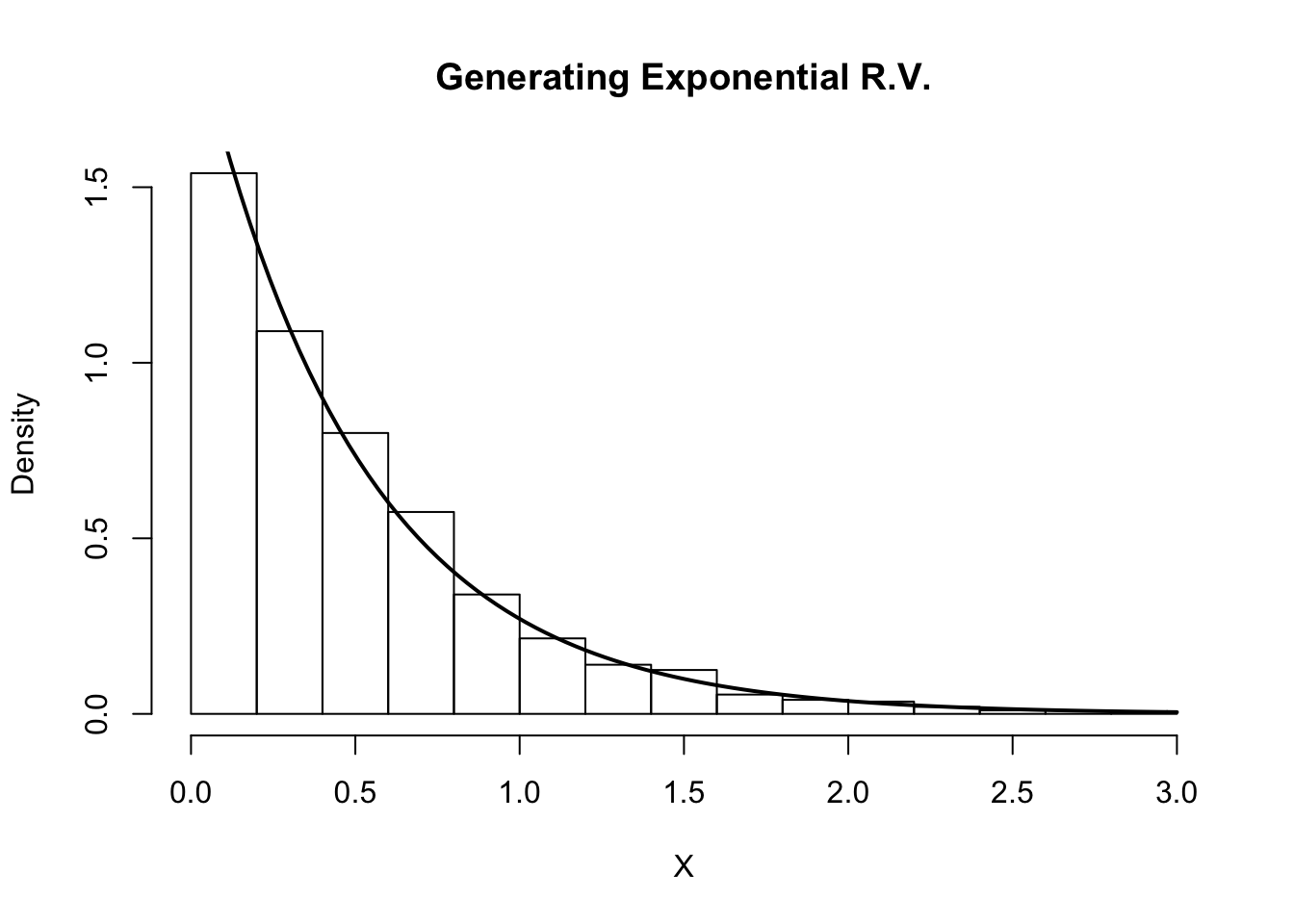

Exponential smoothing is another smoothing-based forecasting method and has been around since the 1950s. Where naive forecasting places 100% weight on the most recent observation and moving averages place equal weight on the k most recent values, exponential smoothing allows for weighted averages in which greater weight is placed on recent observations and lesser weight on older observations. Exponential smoothing methods are intuitive, computationally efficient, and generally applicable to a wide range of time series. Consequently, exponential smoothing is a great forecasting tool to have, and this tutorial will walk you through the basics.

- Replication Requirements: What you’ll need to reproduce the analysis in this tutorial.
- Simple Exponential Smoothing: Technique for data with no trend or seasonality.
- Holt’s Method: Technique for data with trend but no seasonality.
- Holt-Winters Seasonal Method: Technique for data with trend and seasonality.
- Damped Trend Methods: Technique for trends that are believed to become more conservative or “flat-line” over time.
- Exercises: Practice what you’ve learned.

This tutorial primarily uses the fpp2 package. fpp2 will automatically load the forecast package (among others), which provides many of the key forecasting functions used throughout.

```r
library(fpp2)  # also loads the forecast package

# create training and validation sets of the Google stock data
goog.train <- window(goog, end = 900)
goog.test <- window(goog, start = 901)

# create training and validation sets of the qcement data
qcement.train <- window(qcement, end = c(2012, 4))
qcement.test <- window(qcement, start = c(2013, 1))
```

The simplest of the exponential smoothing methods is called “simple exponential smoothing” (SES). The key point to remember is that SES is suitable for data with no trend or seasonal pattern. This section will illustrate why.

For exponential smoothing, we weigh the recent observations more heavily than older observations. The weight of each observation is determined through the use of a smoothing parameter, which we will denote $\alpha$. For a data set with $T$ observations, we calculate our predicted value, $\hat{y}_{T+1}$, which will be based on $y_1$ through $y_T$ as follows:

$$\hat{y}_{T+1} = \alpha y_T + \alpha(1-\alpha) y_{T-1} + \alpha(1-\alpha)^2 y_{T-2} + \cdots + \alpha(1-\alpha)^{T-1} y_1$$

where $0 < \alpha \le 1$. It is also common to use the component form of this model, which uses the following set of equations:

$$\hat{y}_{t+1} = \ell_t$$

$$\ell_t = \alpha y_t + (1-\alpha)\,\ell_{t-1}$$

In both forms we can see that the most weight is placed on the most recent observation. In practice, $\alpha$ equal to 0.1-0.2 tends to perform quite well, but we’ll demonstrate shortly how to tune this parameter. When $\alpha$ is closer to 0 we consider this slow learning because the algorithm gives historical data more weight. When $\alpha$ is closer to 1 we consider this fast learning because the algorithm gives more weight to the most recent observation; therefore, recent changes in the data will have a bigger impact on forecasted values. The following table illustrates how weighting changes based on the $\alpha$ parameter (each weight is $\alpha(1-\alpha)^j$ for the observation $j$ periods back):

| Observation | $\alpha = 0.2$ | $\alpha = 0.4$ | $\alpha = 0.6$ | $\alpha = 0.8$ |
|---|---|---|---|---|
| $y_T$ | 0.2 | 0.4 | 0.6 | 0.8 |
| $y_{T-1}$ | 0.16 | 0.24 | 0.24 | 0.16 |
| $y_{T-2}$ | 0.128 | 0.144 | 0.096 | 0.032 |
| $y_{T-3}$ | 0.1024 | 0.0864 | 0.0384 | 0.0064 |

```r
# … actuals for the test data set")
gridExtra::grid.arrange(p1, p2, nrow = 1)
```

As mentioned and observed in the previous section, SES does not perform well with data that has a long-term trend. In the last section we illustrated how you can remove the trend with differencing and then perform SES. An alternative way to apply exponential smoothing while capturing trend in the data is to use Holt’s Method.

Holt’s Method makes predictions for data with a trend using two smoothing parameters, $\alpha$ and $\beta$, which correspond to the level and trend components, respectively. For Holt’s method, the prediction will be a line of some non-zero slope that extends from the time step after the last collected data point onwards.

The methodology for predictions using data with a trend (Holt’s Method) uses the following equation with $T$ observations. The k-step-ahead forecast is given by combining the level estimate at time t ($\ell_t$) and the trend estimate (which in this example is assumed additive) at time t ($b_t$):

$$\hat{y}_{t+k} = \ell_t + k\,b_t$$

The level ($\ell_t$) and trend ($b_t$) are updated through a pair of updating equations, which is where you see the presence of the two smoothing parameters:

$$\ell_t = \alpha y_t + (1-\alpha)(\ell_{t-1} + b_{t-1})$$

$$b_t = \beta(\ell_t - \ell_{t-1}) + (1-\beta)\,b_{t-1}$$

The first equation means that the level at time t is a weighted average of the actual value at time t and the level in the previous period, adjusted for trend. The second equation means that the trend at time t is a weighted average of the trend in the previous period and the more recent information on the change in the level. Similar to SES, $\alpha$ and $\beta$ are constrained to 0-1, with higher values giving faster learning and lower values providing slower learning.

To capture a multiplicative (exponential) trend we make a minor adjustment to the above equations, replacing the additive trend terms with multiplicative ones:

$$\hat{y}_{t+k} = \ell_t\,b_t^{\,k}$$

$$\ell_t = \alpha y_t + (1-\alpha)\,\ell_{t-1}\,b_{t-1}$$

$$b_t = \beta\,\frac{\ell_t}{\ell_{t-1}} + (1-\beta)\,b_{t-1}$$
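To make the updating equations discussed in this tutorial concrete, here is a minimal base-R sketch of the SES weights, the SES level recursion, and Holt’s additive trend recursion. The function names (`ses_weights`, `ses_forecast`, `holt_forecast`) and the initial values (starting level `y[1]`, starting trend `y[2] - y[1]`) are assumptions made for illustration; they are not part of fpp2 or the forecast package, whose functions estimate the initial states and smoothing parameters differently.

```r
# Weights that SES places on the n most recent observations
# (most recent first): alpha * (1 - alpha)^j for j = 0, 1, ..., n - 1.
ses_weights <- function(alpha, n) {
  alpha * (1 - alpha)^(0:(n - 1))
}

# Simple exponential smoothing: one-step-ahead forecast.
# Assumption: the initial level defaults to the first observation.
ses_forecast <- function(y, alpha, level0 = y[1]) {
  level <- level0
  for (obs in y) {
    level <- alpha * obs + (1 - alpha) * level
  }
  level
}

# Holt's method with an additive trend: forecasts for 1..k steps ahead.
# Assumption: initial level is y[1] and initial trend is y[2] - y[1].
holt_forecast <- function(y, alpha, beta, k = 1,
                          level0 = y[1], trend0 = y[2] - y[1]) {
  level <- level0
  trend <- trend0
  for (obs in y[-1]) {
    prev_level <- level
    level <- alpha * obs + (1 - alpha) * (prev_level + trend)
    trend <- beta * (level - prev_level) + (1 - beta) * trend
  }
  level + seq_len(k) * trend
}

y <- c(1, 2, 3)                                    # a short, perfectly linear series
print(ses_weights(0.2, 3))                         # weights decay geometrically
print(ses_forecast(y, alpha = 0.5))                # SES lags behind the trend
print(holt_forecast(y, alpha = 0.5, beta = 0.5, k = 2))  # Holt extends the line
```

On this toy series the SES forecast stays below the last observation, while Holt’s forecasts continue the line, which is the behaviour the text describes for trended data.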
